Mind the Gap: Data They Share May Not Be Data You Can Use

(Links updated September 15, 2014)

In his closing remarks at the 9th International Digital Curation Conference in San Francisco, Cliff Lynch approvingly observed a theme in this year’s conference: more focus on the challenges of data reuse. Among several speakers who focused on data reuse, Brian Hole from Ubiquity Press, talked about the mission to drive reuse as much as possible, Atul Butte, demonstrated the extraordinary benefits of using publicly available data, Eric Kansa, OpenContext, talked about providing evidence of data reuse as an incentive for original researchers to share data (and received best research paper award), and Jillian Wallis, UCLA, spoke about the benefits of data producers working with data reusers. The focus on data reuse is very encouraging. Data use, after all, is the raison d'être of data sharing. What Lynch is saying, I think, is that setting our sight on data use and reuse adds a key dimension to the work of this community – traditionally concerned with the data creator-curator relationship – and presents it with important challenges and opportunities.

As we continue to work to facilitate data sharing and preservation, we must also work on ways to ensure that data are prepared for use and can be interpretable for years to come. This was also the message of a paper I presented at this conference, with coauthors Ann Green and Libbie Stephenson (slides). The main argument of the paper is that (a) sharing data is necessary but not sufficient for future reuse, (b) ensuring that data is “independently understandable” is crucial, and (c) incorporating a data review process is feasible.

I’ll briefly explain each.

(a) sharing data is necessary but not sufficient for future reuse

The increase in available data, in repositories and other services that provide access to data, is a response to requirements by funders, governments, and institutions, and a reflection of changing cultural norms about open science and scholarly communication. The idea that the data will be used by unspecified people, in unspecified ways, at unspecified time fuels this open data engine, and is thought to have broad benefits (e.g., Piwowar & Vision, and lists by UKDA, DataOne). As data become increasingly available through a variety of mechanisms, there is a growing expectation that the data stand alone; that they can be interpretable and usable from the get-go.

Yet, as anyone who has tried knows, using someone else’s data is not always possible. Assuming, for a minute, that there are no barriers to the data in terms of access or sensitive information, it is often difficult to interpret and make use of the data – it’s difficult when there is only minimal descriptive information; when variable labels are incomprehensible; when you don’t understand how the data were generated. We could find ourselves with a proliferation of research products we can’t really use.

(b) ensuring that data is “independently understandable” is crucial

What if the yardstick for all shared data is that they be “independently understandable for informed reuse”? Well, it is. An OAIS recommended practice is that information be “independently understandable to (and usable by) the Designated Community,” and that there is enough information to be understood “without needing the assistance of the experts who produced the information.” The Royal Society calls for “intelligent openness,” which essentially means the same thing. Gary King, back in 1995, defined the replication standard as, “sufficient information… with which to understand, evaluate, and build upon a prior work.” And Victoria Stodden argues that reproducible research requires such curation of data and code.

This seems elementary. So, how do we make sure data and related research products (e.g., code, algorithms, code books, software) are up to par in this regard? Who is doing this now?

(c) incorporating a data review process is feasible

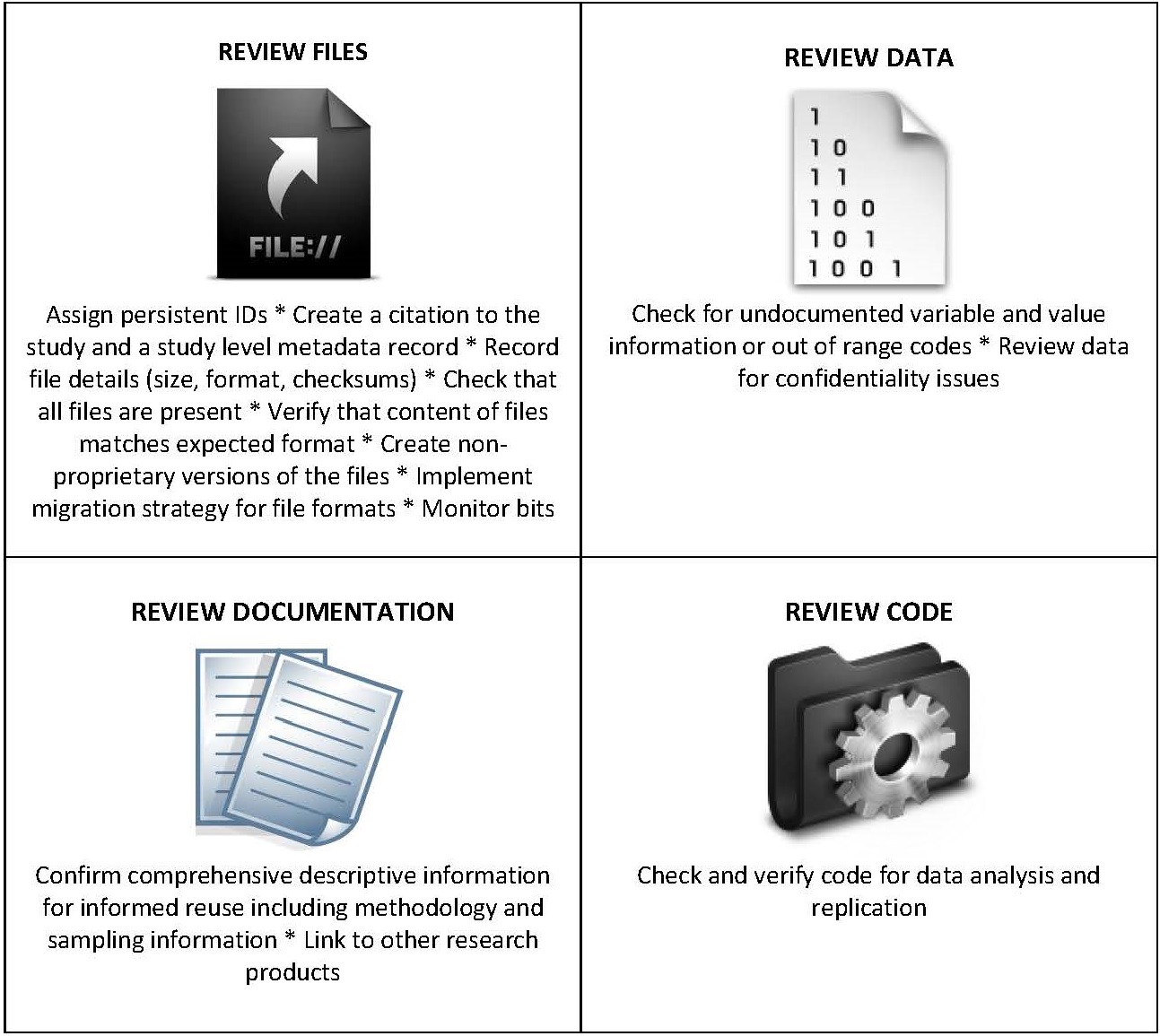

The paper describes the work that data archives do. In a nutshell, they engage in “data quality review,” which includes review of the files, documentation, data, and code before they are made publicly available.

Data archives such as ICPSR have been around for a long time, and their curatorial and preservation mission led them to develop tools and processes to review files before making them available and to take certain actions when the files are found lacking. Data pass through a "pipeline" for processing and enhancement, which includes a review of data and documentation for completeness and format. If necessary, staff recode variables to address confidentiality issues, check for undocumented or out of range codes, and standardize missing values. Staff might also enhance study documentation to ensure that question text, labels, and response categories and value labels are associated with variables. Other social science data archives such as Yale’s ISPS Data Archive and the UCLA SSDA model their curatorial workflow after the ICPSR (ISPS, with Innovations for Poverty Action, is currently developing an open source integrated system for curation).

Data archives such as ICPSR have been around for a long time, and their curatorial and preservation mission led them to develop tools and processes to review files before making them available and to take certain actions when the files are found lacking. Data pass through a "pipeline" for processing and enhancement, which includes a review of data and documentation for completeness and format. If necessary, staff recode variables to address confidentiality issues, check for undocumented or out of range codes, and standardize missing values. Staff might also enhance study documentation to ensure that question text, labels, and response categories and value labels are associated with variables. Other social science data archives such as Yale’s ISPS Data Archive and the UCLA SSDA model their curatorial workflow after the ICPSR (ISPS, with Innovations for Poverty Action, is currently developing an open source integrated system for curation).

While data archives may have a special responsibility to ensure the quality of the data they hold, others in the research ecosystem can, and should, make important contributions.

While data archives may have a special responsibility to ensure the quality of the data they hold, others in the research ecosystem can, and should, make important contributions.

Our paper examines other stakeholders who have the potential to ensure shared data could be reused in the long term: other data repositories, libraries and institutional repositories, scholarly journals, and researchers themselves (see right sidebar).

At one of the sessions at IDCC, a comment was made that data are abundant, but reusable data are scarce. I’m optimistic that there is good potential to address the problem of data that aren’t fit for reuse. No one stakeholder holds the key, and it will take a collaborative effort by the entire community, informed by the disciplines. But the first step is to acknowledge this is a problem.